Autonomous AI Tutors. Humanlike Learning, Without the Overhead.

From any question to a reproducible, evidence-based answer—with provenance, bias checks, and one-click hand-offs to your tools.


Plans and runs reproducible research

Executes roles, maintains snapshots

Individual research results and status

Hashes, inputs and outputs

Sign-off, citations, provenance history

Reusable findings and procedures

SuperLabs orchestrates a multi-agent research team that’s tuned with vertical AI adapters for your domain. Agents plan, debate, and execute protocols; vertical adapters provide tax-specific schemas, rules, and sources—so results are reproducible and defensible.

Question (Clarifier): “Which SST exemptions apply to digital services for SMEs in Malaysia for FY2025?”

Scout: Retrieve MOF/LHDN/SST circulars, budget speeches, and gazettes; collect prior interpretations.

Hypothesizer: Frame testable hypotheses (e.g., “SME digital SaaS with local consumption qualifies for X exemption under Section Y”).

Methodologist: Build a protocol: source list, citation validity checks, applicability tests (entity size, place of supply), and stop-rules.

Experimentalist/Causalist: Run rule evaluators against example invoices and entity profiles; check confounders (cross-border, reverse charge).

Critic/Replicator: Red-team assumptions (edge cases like marketplace facilitators) and replicate with alternate sources.
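The applicability tests and rule evaluation in the worked example above could be sketched roughly as follows. This is a minimal illustration, not SuperLabs code: `EntityProfile`, `TESTS`, `evaluate`, and `hypothesis_supported` are hypothetical names, and the predicates are placeholders that do not encode any actual SST rule.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class EntityProfile:
    """Hypothetical entity profile; field names are assumptions."""
    name: str
    is_sme: bool            # outcome of an entity-size check, supplied by the caller
    place_of_supply: str    # country code where the service is consumed
    cross_border: bool      # confounder flagged during evaluation


# Each applicability test is a named predicate over a profile.
# Placeholder logic only: no real tax rule is encoded here.
TESTS: Dict[str, Callable[[EntityProfile], bool]] = {
    "entity_size": lambda p: p.is_sme,
    "place_of_supply": lambda p: p.place_of_supply == "MY",
    "no_cross_border_confounder": lambda p: not p.cross_border,
}


def evaluate(profile: EntityProfile) -> Dict[str, bool]:
    """Run every applicability test and report each outcome by name."""
    return {name: test(profile) for name, test in TESTS.items()}


def hypothesis_supported(profile: EntityProfile) -> bool:
    """A hypothesis holds for a profile only if every test passes."""
    return all(evaluate(profile).values())


local = EntityProfile("Example Sdn Bhd", is_sme=True, place_of_supply="MY", cross_border=False)
print(evaluate(local))
```

Keeping each test as a named predicate means a Critic/Replicator role could see exactly which test failed for an edge case instead of a single pass/fail flag.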

Malaysia Taxation (worked example)

Validated

Question (Clarifier): “Which SST exemptions apply to digital services for SMEs in Malaysia for FY2025?”

Received: 78lg&I54hf

From any question to a reproducible, evidence-based answer, with provenance, bias checks, and one-click hand-offs to your tools.

SuperLabs interprets intent and assembles a protocol; the Orchestrator assigns agents by role.

Provenance by default

Every step hashed and linked

Versioned everything

Data, protocols, features and claims

Bias & safety gates

Agents red-team assumptions before publish

Environments

Dev → Stage → Prod with promotion gates

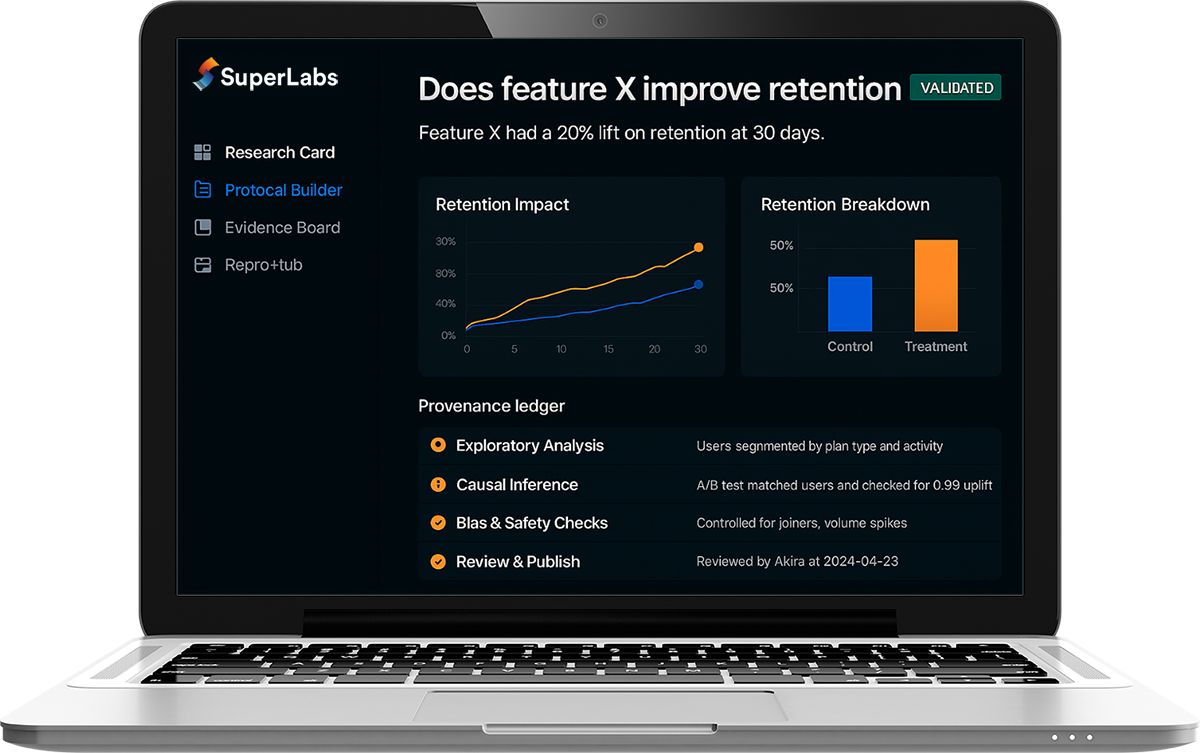

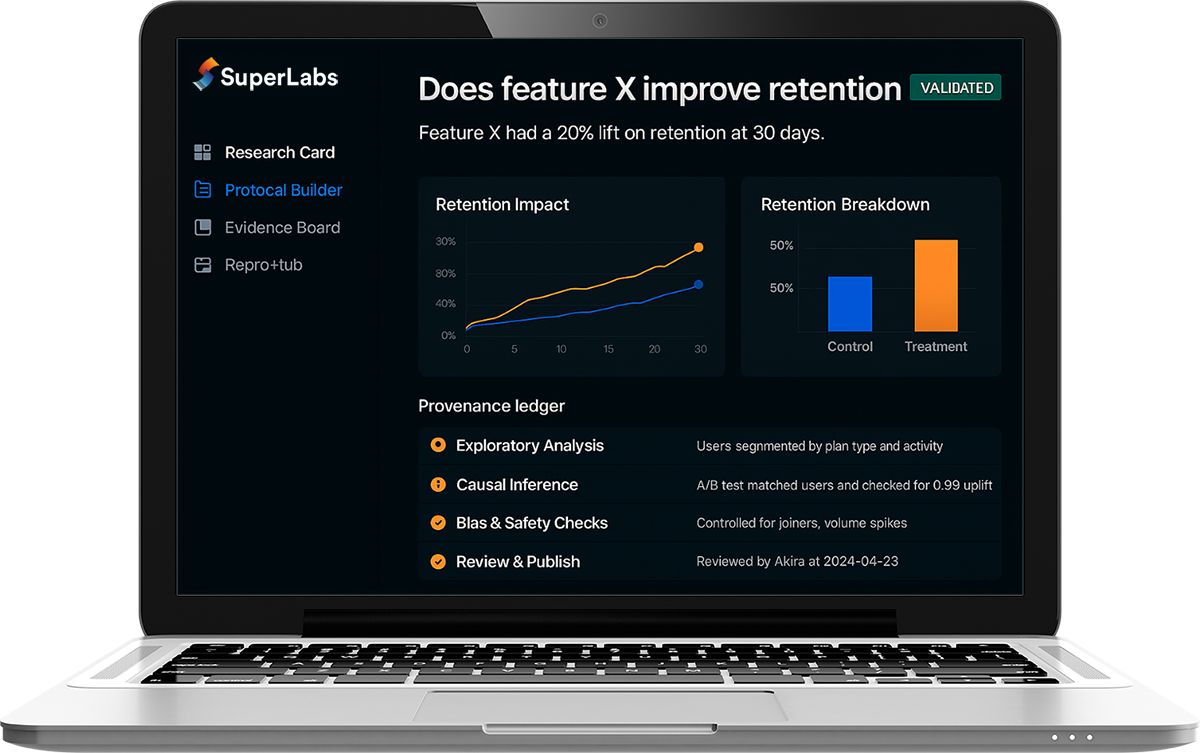

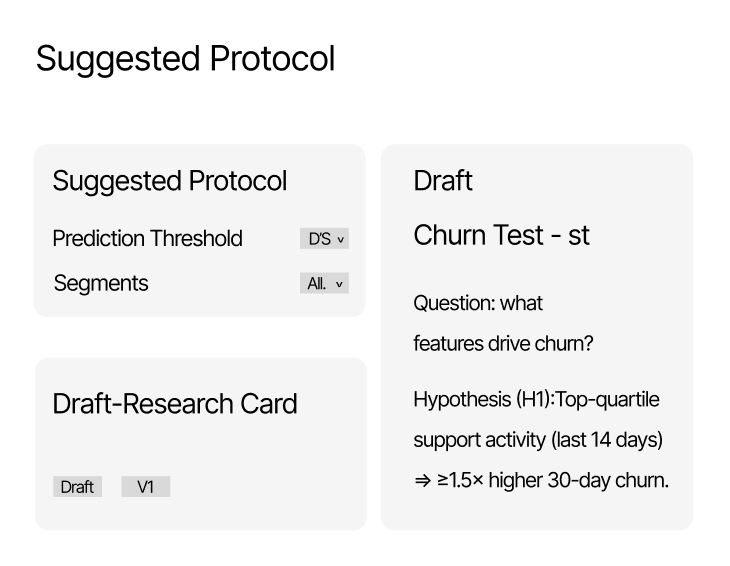

Modules you will see

Question, hypothesis, claim, linked evidence

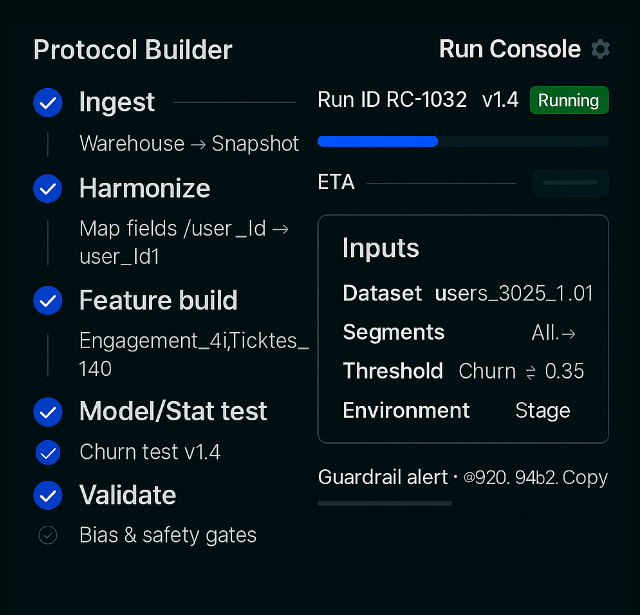

Agents execute experiments-as-code with snapshots, retries, and guardrails.

Agents run steps end-to-end with resource limits, timeouts, and parallelism.

Inputs locked (data + params snapshot) for exact reproducibility.

Scheduling & retries with jitter/backoff; resume from failed step.

Safeguards: PII rules, leakage checks, and bias gates before publish.

Provenance ledger: hash every step; link to the Research Card.
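Three of the execution features above (locked inputs, retries with jitter/backoff, and a ledger that hashes every step) could be sketched as follows. This is an illustrative sketch under stated assumptions, not the SuperLabs implementation: `sha256_json`, `run_with_retries`, and `Ledger` are hypothetical names, and the choice of SHA-256 over canonical JSON is an assumption.

```python
import hashlib
import json
import random
import time


def sha256_json(obj) -> str:
    """Hash a JSON-serializable object using a stable key order."""
    payload = json.dumps(obj, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def run_with_retries(step, inputs, max_attempts=3, base_delay=0.01, sleep=time.sleep):
    """Retry a failing step with exponential backoff and full jitter."""
    for attempt in range(1, max_attempts + 1):
        try:
            return step(inputs)
        except Exception:
            if attempt == max_attempts:
                raise
            # Wait a random time between 0 and base_delay * 2^(attempt - 1).
            sleep(random.uniform(0, base_delay * 2 ** (attempt - 1)))


class Ledger:
    """Append-only ledger; each entry's hash covers the previous entry's hash."""

    FIELDS = ("step", "inputs_hash", "output_hash", "prev")

    def __init__(self):
        self.entries = []

    def append(self, step_name, inputs, output):
        prev = self.entries[-1]["hash"] if self.entries else "0" * 64
        body = {
            "step": step_name,
            "inputs_hash": sha256_json(inputs),  # locked data + params snapshot
            "output_hash": sha256_json(output),
            "prev": prev,
        }
        entry = dict(body, hash=sha256_json(body))
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check that the chain links are intact."""
        prev = "0" * 64
        for entry in self.entries:
            body = {key: entry[key] for key in self.FIELDS}
            if entry["prev"] != prev or entry["hash"] != sha256_json(body):
                return False
            prev = entry["hash"]
        return True
```

Because each entry includes the hash of the one before it, editing any recorded step after the fact makes `verify()` fail from that entry onward. Resource limits, timeouts, and resuming from a failed step are left out of this sketch.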

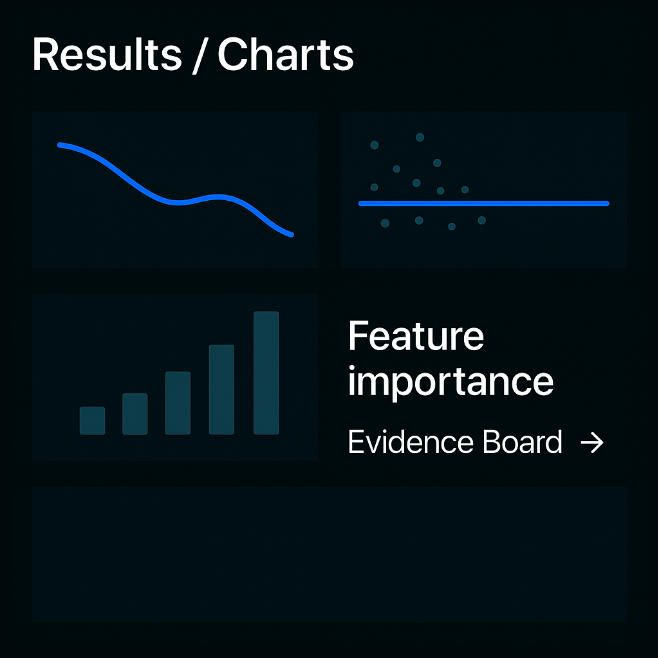

Charts + correlations linked to the Evidence Board; confidence and assumptions are explicit.

Charts & summaries

Evidence links

Confidence & assumptions

Export & share

Variants & cohorts

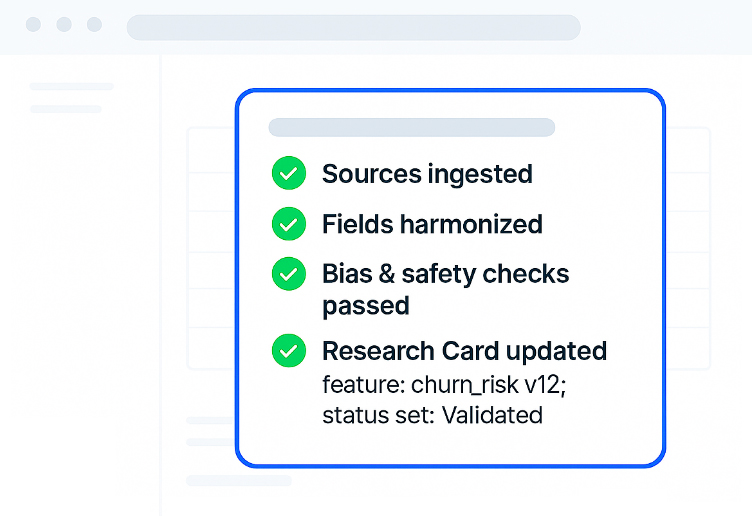

Bias & safety gates pass; provenance chain complete; status set (Exploratory → …).

Bias & safety gates

Provenance hashing

Epistemic status

Repro pack

Approval & sync

Bias checks passed

No leakage

Provenance ledger complete

Status set

Approvals recorded
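The checklist above can be read as a gate that must pass in full before anything is published. A minimal sketch, assuming hypothetical names (`Status`, `ResearchCard`, `failed_gates`, `can_publish`) and using the status labels Exploratory, Validated, and Replicated that appear elsewhere on this page:

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional, Tuple


class Status(Enum):
    """Epistemic status labels shown on every claim."""
    EXPLORATORY = "Exploratory"
    VALIDATED = "Validated"
    REPLICATED = "Replicated"


@dataclass
class ResearchCard:
    """Hypothetical record of the pre-publish checks; field names are assumptions."""
    bias_checks_passed: bool = False
    no_leakage: bool = False
    ledger_complete: bool = False
    status: Optional[Status] = None
    approvals: Tuple[str, ...] = ()


def failed_gates(card: ResearchCard) -> List[str]:
    """Return the checklist items that are not yet satisfied, in checklist order."""
    checks = {
        "Bias checks passed": card.bias_checks_passed,
        "No leakage": card.no_leakage,
        "Provenance ledger complete": card.ledger_complete,
        "Status set": card.status is not None,
        "Approvals recorded": len(card.approvals) > 0,
    }
    return [name for name, ok in checks.items() if not ok]


def can_publish(card: ResearchCard) -> bool:
    """Publishing is allowed only when every gate passes."""
    return not failed_gates(card)
```

Returning the names of the failed gates, instead of a bare boolean, lets a reviewer see what is still outstanding.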

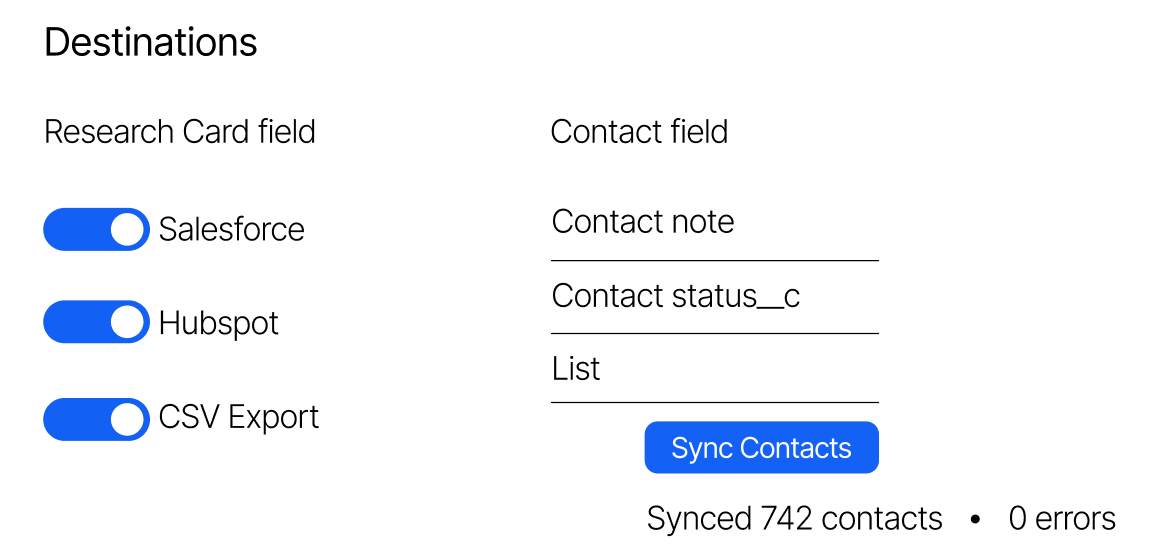

Publish Research Cards/Dossiers/Repro Packs; APIs/webhooks handle downstream updates with optional human review.

Continuous ingestion from documents, data lakes, and the web.

From warehouses and ELNs/LIMS to CRMs, wikis, and chat.

Every Research Card and protocol step is hashed, versioned, and auditable.

Hands-on help across time zones.

How does Evidence Harmonization work?

Can I review/override field mappings?

What gets synced back to my tools?

Turn scattered evidence into confident action. SuperLabs harmonizes sources, runs reproducible protocols in plain language, and syncs validated outcomes back to the tools you already use.

Every step is hashed and versioned—nothing opaque.

Clear labels (Exploratory → Validated → Replicated) on every claim.

Agents red-team sources and assumptions before results ship.

Granular roles, review trails, and air-gapped workflows when required.

SuperLabs turns messy data into traceable, bias-checked results with explicit epistemic status, ready to ship to your tools.